Optimisation of customer support with Deep Learning

Many people who are far from the world of AI have heard about Large language models such as GPT-3 from Open AI.

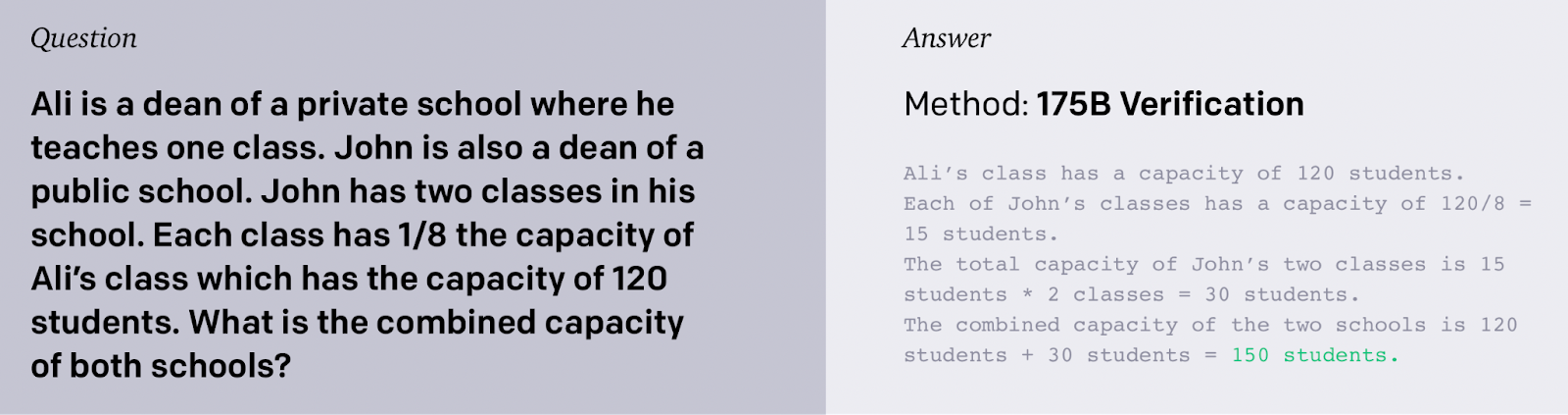

In many ways, unfortunately, this is due to scandals like the one evolving a Google engineer who said that the LaMDA language neural network has consciousness. Also well-known the solving of math tasks

and explaining jokes with the GPT-3 neural network.

However, when it comes to commercially successful business implementations, not many cases come to mind. Chatbots in apps don't always answer our questions correctly, and the support team is often too busy to interfere.

Let me try and fill in the gap in the successful implementation of NLP solutions in business processes.

What was the problem?

Our task was to optimize customer support processes in a big public service organization in Lithuania.

The organization received thousands of questions on 10 different topics. Since there are a large number of foreigners in Lithuania who do not speak Lithuanian, questions were received in English, Ukrainian, Russian.

As a rule, the questions were quite voluminous, so simple chat bots, working on keywords, could not help. This led to repeated answers to the same questions, duplicating tasks that required regular translation and searching for answers in the knowledge base.

Our task was:

1) Simplifying the search for the correct answer, regardless of the language. Data reuse;

2) Increasing the speed of the answer while reducing the burden on staff;

3) Automation of statistics.

How did we solve it?

To complete the task, we used an NLP model based on the most recent for 2022 autoencoder architecture, but with our own modifications.

One of the main advantages is that no data labeling is required to train the model. The neural network extracts all knowledge from existing data.

We trained the model on historical question-answer pairs translated to English. We translated all the dataset to English, because NLP models in English are more accurate. The annual volume of questions was enough to take into account seasonal questions and collect sufficient statistics on various topics.

As a result, when an inquery was received (regardless of the language), the model showed similar historical inqueries (already in the language more convenient for the employee) and historical answers to them. This allowed us to significantly reduce the response time to the question. In 70% of cases, the previously provided answer were completely suitable to answer a new question.

The client was still communicating with a person, which practically guaranteed an adequate response, but the support employee did not have to look up the answers in the knowledge base.

This was especially useful for new employees, they always had a “senior colleague” with all the experience of previous issues.

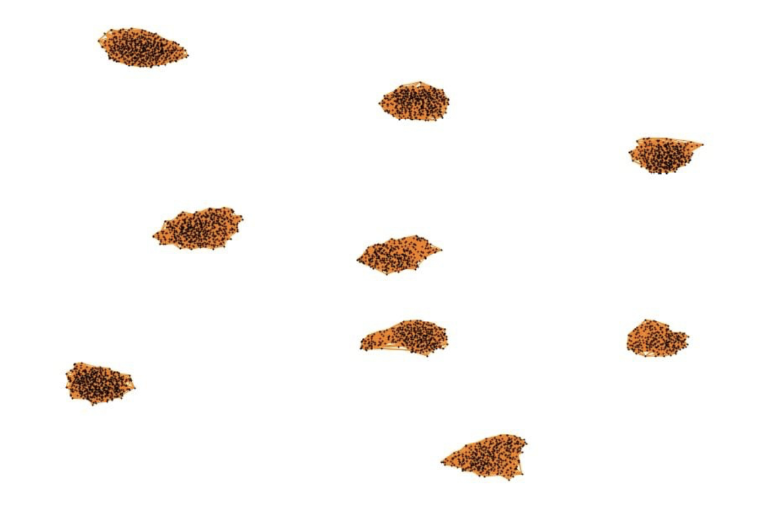

One more advantage was the automatic classification of questions by topic. This was important for tracking the dynamics of questions on various topics by region and, in some cases, taking into account specific addresses mentioned in questions (when it came to complaints about the quality of services).

Here you can see a picture representing the clasterization of inquiries. One dot stands for one question. The dots are automatically decomposed by the model into 10 groups.

Results

The average speed of answering a question has decreased by more than 2 times, the time spent on preparing reports has decreased by 4 times.

This model can be applied not only to government agencies, but also to almost any organization where interaction with a large number of customers via email is required.

We will be happy to discuss how to solve your problems in the field of customer support using AI.

Read also our article about analyzing customer reviews, where our neuromodel understands when cold coffee is good and when it's bad.

We also developed a tool to measure creativity using NLP methods - check how creative you are.